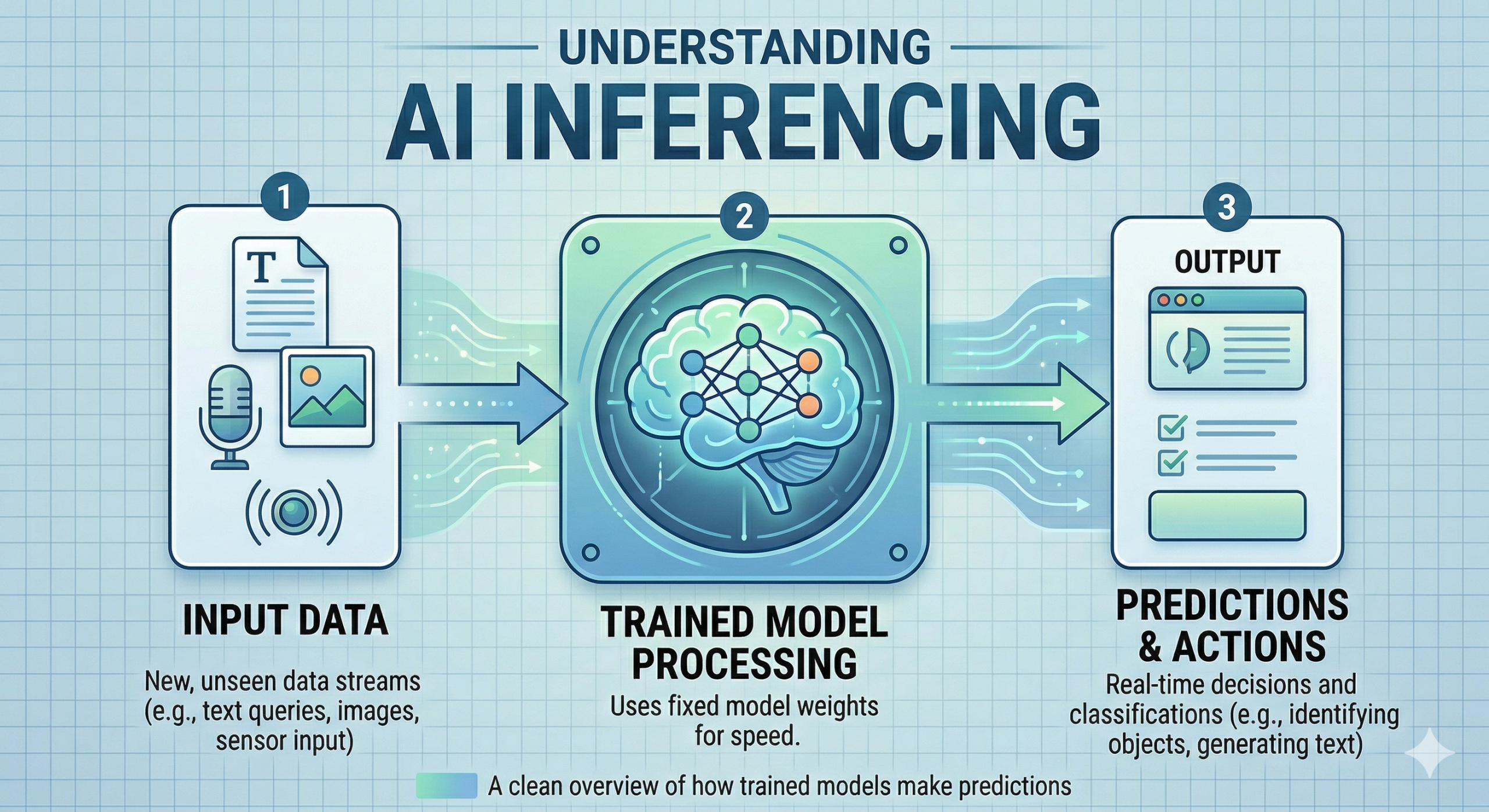

What is AI Inferencing?

Inferencing is the process of running live data through a trained AI model to make a prediction or solve a task. It’s the moment of truth for an AI model: a test of how well it can apply what it learned during training to data it has not seen before.

The High Cost of Inferencing

Training an AI model can be expensive, but over a model’s lifetime the cost of inferencing is typically even higher, because training is largely a one-time expense while inference costs recur with every request. Each time someone runs an AI model on their computer or mobile phone, there’s a cost in kilowatt hours, dollars, and carbon emissions. In fact, running a large AI model can put more carbon into the atmosphere over its lifetime than the average American car.

Faster AI Inferencing

To speed up inferencing, IBM and other tech companies have been investing in approaches such as:

- Developing more powerful computer chips optimized for matrix multiplication

- Shrinking models themselves through pruning and quantization

- Removing bottlenecks in middleware to accelerate inference

Together, these approaches can significantly reduce the time and compute an AI model needs to make a prediction or solve a task, which makes it more practical to deploy across a variety of applications.
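To make the model-shrinking idea concrete, here is a minimal sketch of the two techniques named in the list, written in plain Python on a toy list of weights. The function names, the symmetric int8 scheme, and the magnitude-based pruning rule are illustrative assumptions, not a description of how IBM or any particular library implements them.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


def prune_by_magnitude(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    dropped = set(order[:k])
    return [0.0 if i in dropped else w for i, w in enumerate(weights)]


# Toy weights (hypothetical values chosen for illustration only).
weights = [0.82, -0.03, 0.40, -1.27, 0.01, 0.65]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
pruned = prune_by_magnitude(weights, sparsity=0.5)

print(quantized)
print(pruned)
```

Each quantized weight fits in one byte instead of four, at the price of a rounding error of at most half a quantization step. Pruning trades a different kind of accuracy loss for sparsity that hardware or kernels may be able to exploit.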

Low-Cost Inferencing for Hybrid Cloud

Middleware, the compiler and runtime layer that sits between a model’s code and the hardware, is essential for efficient inference. By optimizing the compiler and runtime, we can reduce the number of nodes in the communication graph and thus the number of round trips between a CPU and a GPU.

This optimization can be especially useful in hybrid cloud environments, where data is processed on-premises and then sent to the cloud for further processing or storage. By reducing the time it takes for AI models to make predictions or solve tasks, we can improve the overall efficiency of these systems.
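The node-reduction idea can be sketched with a toy graph. The example below assumes a linear chain of operations and a single fusion rule (merge adjacent elementwise operations into one node); both the `Op` type and the rule are hypothetical simplifications, and the node count is used only as a rough proxy for CPU-to-GPU round trips. A production compiler applies far more elaborate analyses.

```python
from dataclasses import dataclass


@dataclass
class Op:
    name: str
    elementwise: bool  # True if the op acts independently on each element


def fuse_elementwise(ops):
    """Merge each run of adjacent elementwise ops into a single fused node."""
    fused = []
    for op in ops:
        if fused and op.elementwise and fused[-1].elementwise:
            previous = fused.pop()
            fused.append(Op(previous.name + "+" + op.name, True))
        else:
            fused.append(op)
    return fused


# A toy feed-forward block expressed as a chain of operations.
graph = [
    Op("matmul_1", False),
    Op("add_bias_1", True),
    Op("gelu", True),
    Op("matmul_2", False),
    Op("add_bias_2", True),
]

fused_graph = fuse_elementwise(graph)
print(len(graph), "nodes before fusion,", len(fused_graph), "after")
print([op.name for op in fused_graph])
```

In this sketch the bias addition and the activation collapse into one node, so the intermediate result never has to be handed back between launches.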

Recent Improvements

Recently, IBM Research added three improvements to PyTorch:

- Graph fusion to reduce the number of nodes in the communication graph

- Kernel optimization that streamlines attention computation

- Parallel tensors that split the AI model’s computational graph into strategic chunks

These improvements have been shown to significantly speed up inference, allowing models to make predictions or solve tasks more quickly. They target latency and throughput rather than the accuracy of the model’s output.
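The idea of splitting a model's computation into chunks can be illustrated with one common scheme, column-wise tensor parallelism, in which each device holds a slice of a weight matrix and computes part of the output. Whether this matches the exact partitioning IBM Research used is an assumption; the helper names below are hypothetical, and plain Python lists stand in for tensors on separate devices.

```python
def matvec(x, W):
    """Multiply row vector x (length n) by matrix W (n rows, m columns)."""
    return [sum(x[i] * W[i][j] for i in range(len(x))) for j in range(len(W[0]))]


def split_columns(W, parts):
    """Split W into `parts` equal column blocks, one per (simulated) device."""
    columns = len(W[0])
    if columns % parts != 0:
        raise ValueError("column count must be divisible by the number of parts")
    step = columns // parts
    return [[row[p * step:(p + 1) * step] for row in W] for p in range(parts)]


x = [1.0, 2.0]
W = [
    [1.0, 0.0, 2.0, -1.0],
    [0.5, 1.0, 0.0, 3.0],
]

shards = split_columns(W, parts=2)                # each shard lives on one device
partials = [matvec(x, shard) for shard in shards]  # computed independently
combined = [value for part in partials for value in part]

full = matvec(x, W)  # single-device reference result
print(combined == full)
```

Because each shard's output columns are independent, the partial results only need to be concatenated. The cost that a real system has to manage is the communication required to gather them.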

What’s Next?

To further boost inferencing speeds, IBM and PyTorch plan to add two more levers to the PyTorch runtime and compiler for increased throughput:

- Dynamic batching that allows the runtime to consolidate multiple user requests into a single batch

- Quantization that allows the compiler to run the computational graph at lower precision

Both levers aim to raise throughput further: batching spreads the cost of one pass through the model across several requests, and lower precision reduces the memory and compute needed per operation.
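Dynamic batching can be sketched as a queue that the runtime drains in groups. The example below is a simplified illustration under stated assumptions: `drain_batches` and `run_model` are hypothetical names, the model call is a stand-in, and batches are cut by size only, whereas a real serving runtime would typically also apply a time limit so that early requests do not wait indefinitely.

```python
from collections import deque


def drain_batches(pending, max_batch_size):
    """Group queued requests into batches of at most max_batch_size."""
    batches = []
    while pending:
        batch = []
        while pending and len(batch) < max_batch_size:
            batch.append(pending.popleft())
        batches.append(batch)
    return batches


def run_model(batch):
    """Stand-in for a single forward pass over the whole batch (hypothetical)."""
    return ["reply to " + prompt for prompt in batch]


# Five user requests arrive while the model is busy.
queue = deque(["a", "b", "c", "d", "e"])

batches = drain_batches(queue, max_batch_size=2)
outputs = [run_model(batch) for batch in batches]

print(len(batches), "model calls instead of 5")
```

With a batch size of two, the five requests are served in three model calls, and each user still receives an individual reply.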

Conclusion

AI inferencing is an essential part of many machine learning applications. By improving the speed and efficiency of AI models, we can unlock new possibilities for innovation and growth. Whether you’re a developer, researcher, or simply someone interested in the latest advancements in AI, I hope this article has provided valuable insights into the world of AI inferencing.

URL Reference: https://research.ibm.com/blog/AI-inference-explained